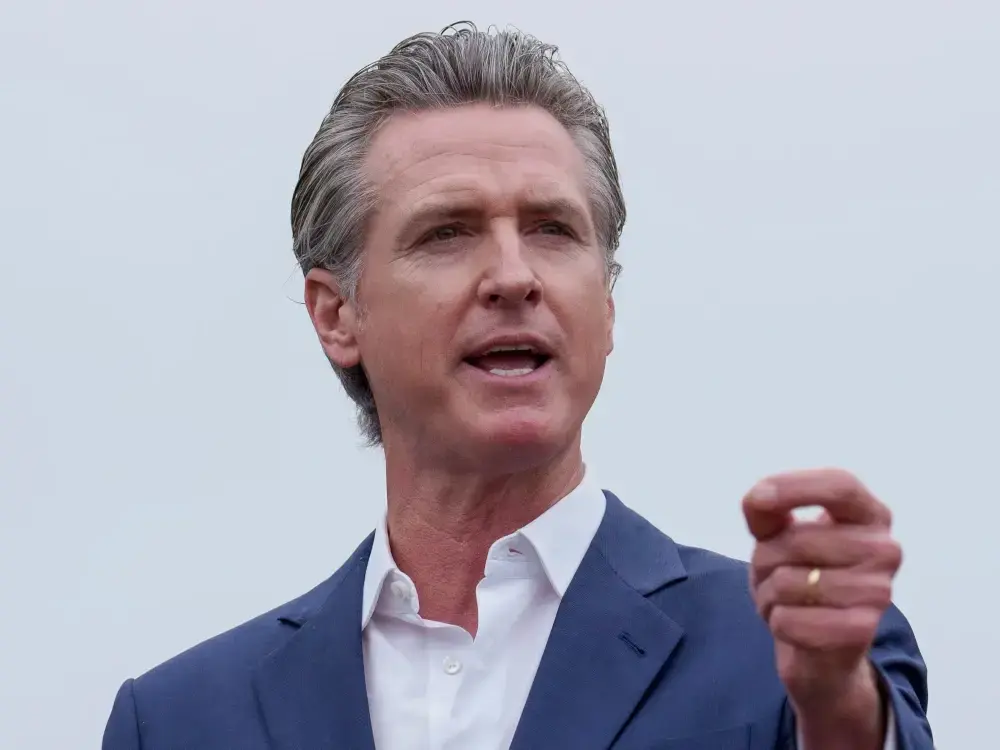

The AI bill Newsom didn’t veto — AI devs must list models’ training data

pivot-to-ai.com
