I had never thought about any of this before, but it actually makes perfect sense.

By its nature, an LLM feeds back a statistically close approximation of what you expect to see, and the more you engage with it (which is to say, the more you refine your prompts), the closer it tends to get to precisely what you expect to see.

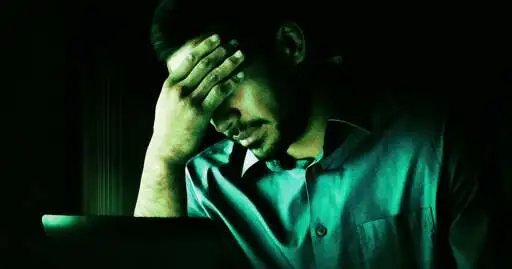

"He was like, 'just talk to [ChatGPT]. You'll see what I'm talking about,'" his wife recalled. "And every time I'm looking at what's going on the screen, it just sounds like a bunch of affirming, sycophantic bullsh*t."

Exactly. To an outside observer, that's likely what it would look like, because in some sense, that's exactly what it is.

But to the person engaging with it, it's a revelation of the deep, secret, hidden truths that they always sort of suspected lurked at the heart of reality. Never mind that the LLM is just stringing together the words and phrases statistically most likely to follow the prompts it's been given. To the person feeding it those prompts, it seems like, at long last, verification of what they've always suspected.

I can totally see how people could get sucked in by that.