This is the technology worth trillions of dollars huh

They took money away from cancer research programs to fund this.

I'd rather manually search for info.

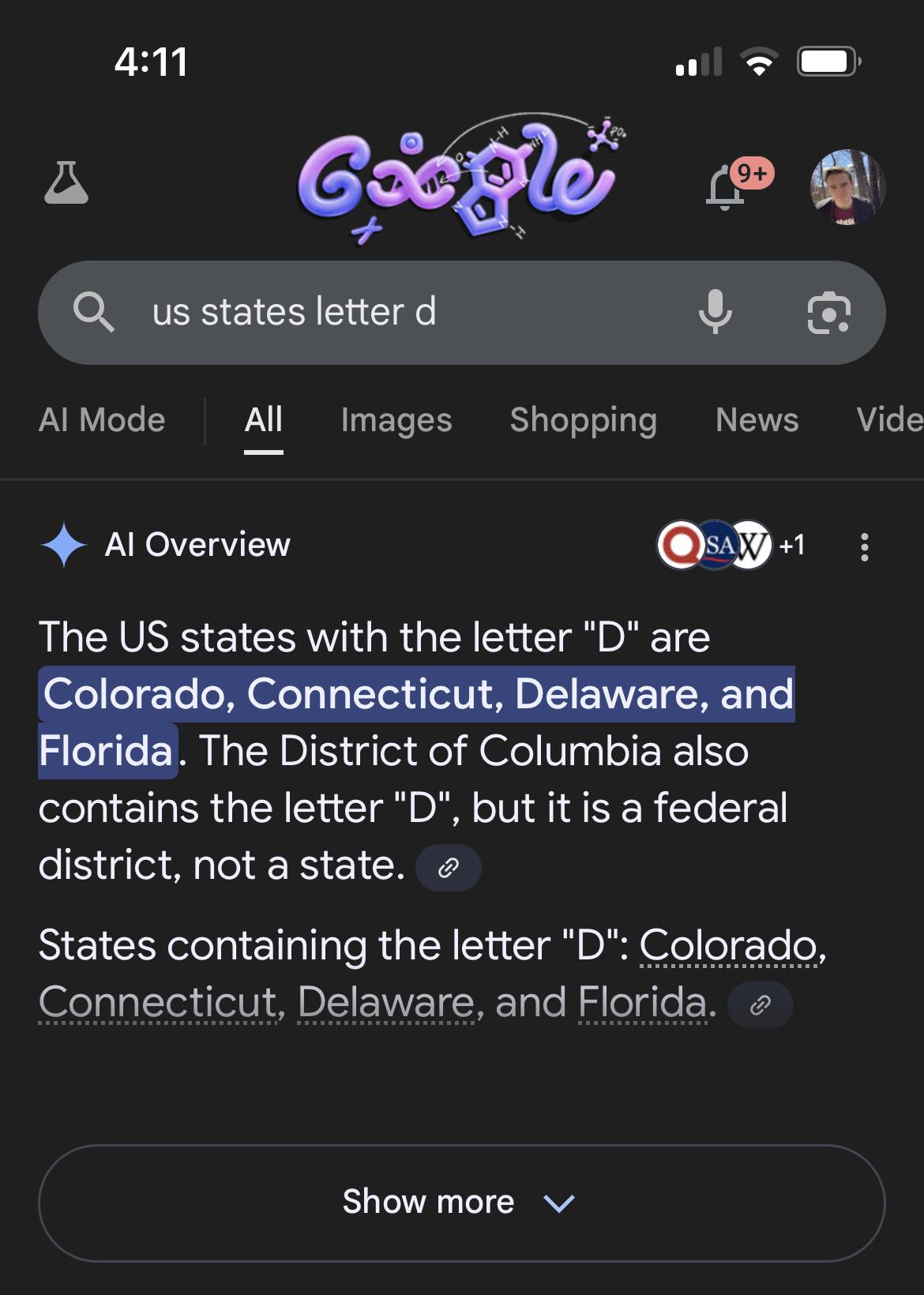

✅ Colorado

✅ Connedicut

✅ Delaware

❌ District of Columbia (on a technicality)

✅ Florida

But not

❌ I'aho

❌ Iniana

❌ Marylan

❌ Nevaa

❌ North Akota

❌ Rhoe Islan

❌ South Akota

Gosh tier comment.

You just described most of my post history.

Everyone knows it's properly spelled "I, the ho" not Idaho. That’s why it didn’t make the list.

Connedicut.

I wondered if this has been fixed. Not only has it not, the AI has added Nebraska.

I would assume it uses a different random seed for every query. Probably fixed sometimes, not fixed other times.

What about Our Kansas? Cause according to Google Arkansas has one o in it. Refreshing the page changes the answer though.

Just checked, it sure does say that! AI spouting nonsense is nothing new, but it's pretty ironic that a large language model can't even parse what letters are in a word.

Blows my mind people pay money for wrong answers.

Listen, we just have to boil the ocean five more times.

Then it will hallucinate slightly less.

Or more. There’s no way to be sure since it’s probabilistic.

If you want to get irate about energy usage, shut off your HVAC and open the windows.

Worthless comment.

Well, for anyone who knows a bit about how LLMs work, it’s pretty obvious why LLMs struggle with identifying the letters in the words

Well go on..

They don't look at it letter by letter but in tokens, which are automatically generated separately based on occurrence. So while 'z' could be its own token, 'ne' or even 'the' could be treated as a single token vector. Of course, 'e' would still be a separate token when it occurs in isolation. You could even have 'le' and 'let' as separate tokens, afaik. And each token is just a vector of numbers, like 300 or 1000 numbers that represent that token in a vector space. So 'de' and 'e' could be completely different and dissimilar vectors.

so 'delaware' could look to an llm more like de-la-w-are or similar.

of course you could train it to figure out letter counts based on those tokens with a lot of training data, though that could lower performance on other tasks and counting letters just isn't that important, i guess, compared to other stuff
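A minimal sketch of the idea above, assuming a made-up vocabulary (`VOCAB`) and a greedy longest-match split as a stand-in for real subword tokenization. Real tokenizers learn their vocabularies from data and split differently, so treat the pieces here as purely hypothetical:

```python
# Toy illustration only: VOCAB is a made-up subword vocabulary,
# not the vocabulary of any real model.
VOCAB = {"de": 0, "la": 1, "w": 2, "are": 3, "d": 4, "e": 5, "l": 6, "a": 7, "r": 8}


def tokenize(word: str) -> list[str]:
    """Greedy longest-match split of `word` into pieces found in VOCAB."""
    pieces = []
    i = 0
    while i < len(word):
        # Try the longest candidate first, then shrink until something matches.
        for j in range(len(word), i, -1):
            if word[i:j] in VOCAB:
                pieces.append(word[i:j])
                i = j
                break
        else:
            raise ValueError(f"no token covers {word[i]!r}")
    return pieces


pieces = tokenize("delaware")
ids = [VOCAB[p] for p in pieces]
print(pieces)  # ['de', 'la', 'w', 'are']
print(ids)     # [0, 1, 2, 3]

# The model is fed the IDs. The ID for a bare 'd' (4) never shows up,
# even though the letter d is plainly in the word.
print(VOCAB["d"] in ids)  # False
```

With this toy vocabulary the word arrives as four IDs, none of which is the ID for the letter d on its own, which is roughly why "does this word contain a d" is not a lookup the model gets for free.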

I get the sentiment behind this post, and it's almost always funny when LLMs are this dumb. But this is not a good argument against the technology. It is akin to a climate change denier using the argument: "Look! It snowed today, climate change is so dumb, huh?"

You do know that AI is fast approaching (if it isn't already) a leading CAUSE of climate change?

While the environmental impact of AI is absolutely horrible I don't think it is even in the top 10 of industries. Meat production, Transportation by cars, Airplanes, plastic products etc are all much worse.

The problem is AI is absolutely useless for how big its climate impact is. The other industries at least provide value.

It’s not worth the environmental impact

AI writes code for me. It makes dumbass mistakes that compilers automatically catch. It takes three or four rounds to correct a lot of random problems that crop up. Above all else, it's got limited capacity - projects beyond a couple thousand lines of code have to be carefully structured and spoonfed to it - a lot like working with junior developers. However: it's significantly faster than Googling for the information needed to write the code like I have been doing for the last 20 years, it does produce good sample code (if you give it good prompts), and it's way less frustrating and slow to work with than a room full of junior developers.

That's not saying we fire the junior developers, just that their learning specializations will probably be very different from the ones I was learning 20 years ago, just as those were very different than the ones programmers used 40 and 60 years ago.

I agree, Cursor and other IDE integrations have been a game changer. They made a certain range of problems we used to have in software dev way easier. And for easy code, like prototyping or inconsequential testing, it's so, so fast. What I've found is that it's particularly efficient at helping you do stuff you would have been able to do alone and are able to check once it's done. You need to be careful when asking about stuff you aren't familiar with though, because it will comfortably lead you toward a mistake that will waste your time.

Though one thing I have to say: I'm very annoyed by its constant agreeing with what I say, and enabling me when I'm doing dumb shit. I wish it would challenge me more and tell me when I'm an idiot.

"Yes you are totally right", "This is a very common issue that everybody has", "What a great and insightful question"...... I'm so tired of this BS.

"This is the technology worth trillions of dollars"

You can make anything fly high in the sky with enough helium, just not for long.

(Welcome to the present day Tech Stock Market)

Bubbles and crashes aren't a bug in the financial markets, they're a feature. There are whole legions of investors and analysts who depend on them. Also, they have been a feature of financial markets since anything resembling a financial market was invented.

We're turfing out students by the tens on academic misconduct. They are handing in papers with references that clearly state "generated by Chat GPT". Lazy idiots.

This is why invisible watermarking of AI-generated content is likely to be so effective. Even primitive watermarks like file metadata. It's not hard for anyone with technical knowledge to remove, but the thing with AI-generated content is that anyone who dishonestly uses it when they are not supposed to is probably also too lazy to go through the motions of removing the watermarking.

Couldn't students just generate a paper with ChatGPT, open two windows side by side and then type it out in a Word document?

if you are going to do all that, just do the research and learn something.

Huh that actually does sound like a good use-case of LLMs. Making it easier to weed out cheaters.

Just another trillion, bro.

Just another 1.21 jigawatts of electricity, bro. If we get this new coal plant up and running, it'll be enough.

Behold the most expensive money burner!

Hey look the markov chain showed its biggest weakness (the markov chain)!

In the training data, it could be assumed by output that Connecticut usually follows Colorado in lists of two or more states containing Colorado. There is no other reason for this to occur as far as I know.

Markov Chain based LLMs (I think that's all of them?) are dice-roll systems constrained to probability maps.

Edit: just to add because I don't want anyone crawling up my butt about the oversimplification. Yes. I know. That's not how they work. But when simplified to words so simple a child could understand them, it's pretty close.
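To make the "dice roll constrained to a probability map" picture concrete, here is a small sketch under the same oversimplification. The table `NEXT_STATE_PROBS` and its numbers are invented for illustration, and a real LLM conditions on the whole context rather than only the previous item:

```python
import random

# Made-up numbers for illustration; a real model computes these from context.
NEXT_STATE_PROBS = {
    "Colorado": {"Connecticut": 0.7, "Delaware": 0.2, "Florida": 0.1},
    "Connecticut": {"Delaware": 0.8, "Florida": 0.2},
    "Delaware": {"Florida": 1.0},
}


def continue_list(start: str, seed: int) -> list[str]:
    """Walk the probability map from `start`, rolling a seeded die at each step."""
    rng = random.Random(seed)
    out = [start]
    # Stop once we reach an entry with no outgoing probabilities.
    while out[-1] in NEXT_STATE_PROBS:
        options = NEXT_STATE_PROBS[out[-1]]
        nxt = rng.choices(list(options), weights=list(options.values()), k=1)[0]
        out.append(nxt)
    return out


# Different seeds can give different continuations of the same prompt.
for seed in range(5):
    print(seed, continue_list("Colorado", seed))
```

The same seed always reproduces the same list, while different seeds may or may not include Connecticut, which fits the "fixed sometimes, not fixed other times" experience reported earlier in the thread.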

I was wondering if you'd get similar results for states with the letter R, since there's lots of prior art mentioning these states as either "D" or "R" during elections.

Oh, I was thinking it's because people pronounce it Connedicut

Awe cute!

Yesterday I asked Claude Sonnet what was on my calendar (since they just sent a pop-up announcing that feature)

It listed my work meetings on Sunday, so I tried to correct it…

You’re absolutely right - I made an error! September 15th is a Sunday, not a weekend day as I implied. Let me correct that: This Week’s Remaining Schedule: Sunday, September 15

Just today when I asked what’s on my calendar it gave me today and my meetings on the next two thursdays. Not the meetings in between, just thursdays.

Something is off in AI land.

Edit: I asked again: gave me meetings for Thursdays again. Plus it might think I’m driving in F1

Also, Sunday September 15th is a Monday… I’ve seen so many meeting invites with dates and days that don’t match lately…

Yeah, it said Sunday, I asked if it was sure, then it said I'm right and went back to Sunday.

I assume the training data has the model think it's a different year or something, but this feature is straight up not working at all for me. I don't know if they actually tested this at all.
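The "different year" guess is easy to sanity-check with the standard library. This is only a sketch: the thread never states the year, so 2025 is an assumption about when the comment was written, and 2024 stands in for the hypothetical "model is a year behind" case:

```python
from datetime import date

# Hard-coded so the output does not depend on the system locale.
WEEKDAYS = ("Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday")


def weekday_name(year: int, month: int, day: int) -> str:
    """Return the English weekday name for a Gregorian calendar date."""
    # date.weekday() returns 0 for Monday through 6 for Sunday.
    return WEEKDAYS[date(year, month, day).weekday()]


# 2025 is an assumed "current" year; 2024 is the off-by-one-year hypothesis.
for year in (2024, 2025):
    print(year, weekday_name(year, 9, 15))
# 2024 Sunday
# 2025 Monday
```

Under those assumptions, "Sunday, September 15" is exactly what a model anchored to the previous year would say, though this does not show that is what actually happened.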

Sonnet seems to have gotten stupider somehow.

Opus isn't following instructions lately either.

A few weeks ago my Pixel wished me a Happy Birthday when I woke up, and it definitely was not my birthday. Google is definitely letting a shitty LLM write code for it now, but the important thing is they're bypassing human validation.

Stupid. Just stupid.

pixel? ~~have you heard about grapheneOS tho..~~

Connedicut.

Close. We natives pronounce it 'kuh ned eh kit'

So does everyone else

ChatGPT is just as stupid.

it's actually getting dumber.

You joke, but I bet you didn't know that Connecticut contained a "d"

I wonder what other words contain letters we don't know about.

The famous 'invisible D' of Connecticut, my favorite SCP.

That actually sounds like a fun SCP - a word that doesn't seem to contain a letter, but when testing for the presence of that letter using an algorithm that exclusively checks for that presence, it reports the letter is indeed present. Any attempt to check where in the word the letter is, or to get a list of all letters in that word, spuriously fails. Containment could be fun, probably involving amnestics and widespread societal influence. I also wonder if they could create an algorithm for checking letter presence that can be performed by hand without leaking any other information to the person performing it, reproducing the anomaly without computers.

ct -> d is a not-uncommon OCR fuck up. Maybe that's the source of its garbage data?

SCP-00WTFDoC (lovingly called "where's the fucking D of Connecticut" by the foundation workers, also "what the fuck, doc?")

People think it's safe, because it's "just an invisible D", not even a dick, just the letter D, and it only manifests verbally when someone tries to say "connecticut" or write it down. When you least expect it, everyone heard "Donnedtidut", everyone read that thing and a portal to that fucking place opens and drags you in.

Words are full of mystery! Besides the invisible D, Connecticut has that inaudible C...

The d in Connecticut is between the e and the i. They don't connect because it was cut.

Connecticut is Jewish?

Every American I know does pronounce it like Connedicut 🤔

Really? Everyone I know calls it kinetic-cut. But I grew up in New England.

Connedicut

I was going to joke that if you're from Connedicut you never pronounce the first d in the word: Conne-icut

Connecticut do have a D in it: mine.

So the Dakotas get a pass

And Idaho

The letters that make up words are a common blind spot for AIs. Since they are trained on strings of tokens (roughly words), they don't have a good concept of which letters are inside those words or what order they are in.

It's very funny that you can get ChatGPT to spell out the word (making each letter an individual token) and still be wrong.

Of course it makes complete sense when you know how LLMs work, but this demo does a very concise job of short-circuiting the cognitive bias that talking machine == thinking machine.

I find it bizarre that people find these obvious cases to prove the tech is worthless. Like saying cars are worthless because they can't go under water.

I find it bizarre that people find these obvious cases to prove the tech is worthless. Like saying cars are worthless because they can't go under water.

This reaction is because conmen are claiming that current generations of LLM technology are going to remove our need for experts and scientists.

We're not demanding submersible cars, we're just laughing about the people paying top dollar for the latest electric car while planning an ocean cruise.

I'm confident that there's going to be a great deal of broken... everything...built with AI "assistance" during the next decade.

Not bizarre at all.

The point isn't "they can't do word games therefore they're useless", it's "if this thing is so easily tripped up on the most trivial shit that a 6-year-old can figure out, don't be going round claiming it has PhD level expertise", or even "don't be feeding its unreliable bullshit to me at the top of every search result".

Then why is Google using it for questions like that?

Surely it should be advanced enough to realise its weakness with this kind of question and just not give an answer.

Well it also can't code very well either

Understanding the bounds of tech makes it easier for people to gauge its utility. The only people who desire ignorance are those that profit from it.

Well, it's almost correct. It's just one letter off. Maybe if we invest millions more it will be right next time.

Or maybe it is just not accurate and never will be... I will not ever fully trust AI. I'm sure there are use cases for it, I just don't have any.

Just one more private nuclear power plant, bro…

They’re using oil, gas, and if Trump gets his way, fucking coal.

Unless you count Three Mile Island.

Cases where you want something googled quickly to get an answer, and it's low consequence when the answer is wrong.

I.e., say, a bar argument over whether that guy was in that movie. Or you need a customer service agent but don't actually care about your customers and don't want to pay someone, or you're coding a feature for Windows.

Isn't checking if someone was in a movie really easy to do without AI?

No, this is Google throwing the cheapest possible shit at you that is barely capable of stringing together 5 coherent sentences and has the reasoning capability of a tapeworm.

Here is the output of the minimalist open Chinese model Qwen3, that runs locally on my 6 year old mid-end PC:

The US states that contain the letter "d" (case-insensitive, as state names are typically capitalized) are as follows. I've verified each state name for the presence of the letter "d" in its standard spelling:

Colorado (contains "d" in "Colorado")

Delaware (starts with "D")

Florida (contains "d" in "Florida")

Idaho (contains "d" in "Idaho")

Indiana (contains "d" in "Indiana")

Maryland (ends with "d" in "Maryland")

Nevada (contains "d" in "Nevada")

North Dakota (contains "d" in "Dakota")

Rhode Island (contains "d" in "Rhode")

South Dakota (contains "d" in "Dakota")

Total: 10 states.
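For anyone who wants to check that count without trusting any model, a short deterministic sketch follows. The `STATES` list is typed in by hand here (the 50 states, no DC), so treat it as an assumption of the example rather than an authoritative data source:

```python
# The 50 US state names, typed in by hand for this example (no DC).
STATES = (
    "Alabama, Alaska, Arizona, Arkansas, California, Colorado, Connecticut, "
    "Delaware, Florida, Georgia, Hawaii, Idaho, Illinois, Indiana, Iowa, "
    "Kansas, Kentucky, Louisiana, Maine, Maryland, Massachusetts, Michigan, "
    "Minnesota, Mississippi, Missouri, Montana, Nebraska, Nevada, "
    "New Hampshire, New Jersey, New Mexico, New York, North Carolina, "
    "North Dakota, Ohio, Oklahoma, Oregon, Pennsylvania, Rhode Island, "
    "South Carolina, South Dakota, Tennessee, Texas, Utah, Vermont, Virginia, "
    "Washington, West Virginia, Wisconsin, Wyoming"
).split(", ")


def states_containing(letter: str) -> list[str]:
    """Return the states whose name contains `letter`, ignoring case."""
    return [s for s in STATES if letter.lower() in s.lower()]


with_d = states_containing("d")
print(len(STATES))              # 50
print(len(with_d))              # 10
print(with_d)
print("Connecticut" in with_d)  # False
print("Rhode Island".lower().count("d"))  # 2
```

A plain substring test agrees with the ten states listed above and confirms that Connecticut is not among them.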

Exactly.

The model that responds to your search query is designed to be cheap, not accurate. It has to generate an answer to every single search issued to Google. They're not using high parameter models with reasoning because those would be ruinously expensive.

Illinois contains a hidden D which is in your mom.

I didn't understand your comment, so I asked the same LLM as before.

It explained it and I think that I get it now. Low-grade middle-school-"Your Mom"-joke, is it? Ha-ha... 🙄

This also means that AI did better than myself at both tasks I've given it today (I found only 9 states with "d" when going over the state-list myself...).

Whatever. I'm gonna have second lunch now.

Gemini is trained on reddit data, what do you expect?

Honestly? Way more d.

mine's even worse somehow

You gave a slightly different prompt.

the thing still gave a stupid answer

Also verified

Wait a sec, Minnasoda doesn't have a d??

*mini soda

That's how everyone from America seems to say it, besides Jesse Ventura who heavily emphasises the t.

Neither does soda

Where's Nevada? And Montana?

I just love the d in Montana. Shame it missed it.

Gemini is just a depressed and suicidal AI, be nice to it.

I had it completely melt down one day while messing around with its coding shit, I had to console it and tell it it's doing good, we will solve this, was fucking weird as fuck.

It'll go in endless circles until it finds out why it's wrong,

then it will go right back to them anyway! lol

"AI" hallucinations are not a problem that can be fixed in LLMs. They are an inherent aspect of the process and an inevitable result of the fact that LLMs are mostly probabilistic engines, with no supervisory or introspective capability, which actual sentient beings possess and use to fact-check their output. So there. :p

It's funny seeing the list and knowing connecticut is only there because it's alphabetically after colorado (in fact all four listed appear in that order alphabetically) because they probably scraped so many lists of states that the alphabetical order is the statistically most probable response in their corpus when any state name is listed.
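The alphabetical-run observation can be checked mechanically. In this sketch the four names in `ai_answer` are assumed to be the ones from the screenshot (Colorado, Connecticut, Delaware, Florida), and `NAMES` is just the first ten states alphabetically, scrambled by hand:

```python
# The first ten states alphabetically, deliberately scrambled.
NAMES = ["Florida", "Alaska", "Delaware", "Georgia", "Colorado",
         "Arizona", "Alabama", "Connecticut", "California", "Arkansas"]

# Assumed to be the four names in the AI answer, in the order given.
ai_answer = ["Colorado", "Connecticut", "Delaware", "Florida"]

ordered = sorted(NAMES)
start = ordered.index(ai_answer[0])
# Take as many consecutive alphabetical entries as the answer has items.
window = ordered[start:start + len(ai_answer)]

print(window)               # ['Colorado', 'Connecticut', 'Delaware', 'Florida']
print(window == ai_answer)  # True
```

The answer matching a consecutive alphabetical window is consistent with the "it is reciting a memorized list" explanation, though it does not prove that is the cause.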

inevitable result of the fact that LLMs are mostly probabilistic engines

So we should better put the question like

"What is the probability of a D suddenly appearing in Connecticut?"

A wild 'D' suddenly appears! (that's about all I know about Pokemon...)

In Copilot terminology, this is a “quick response” instead of the “think deeper” option. The latter actually stops to verify the initial answer before spitting it out.

Deep thinking gave me this: Colorado, Delaware, Florida, Idaho, Indiana, Maryland, North Dakota, Rhode Island, and South Dakota.

It took way longer, but at least the list looks better now. Somehow it missed Nevada, so it clearly didn’t think deep enough.

"I asked it to burn an extra 2 kWh of energy breaking the task up into small parts to think about it in more detail, and it still got the answer wrong"

Yeah that pretty much sums it up. Sadly, it didn’t tell me how much coal was burned and how many starving orphan puppies it had to stomp on to produce the result.

Hey hey hey hey don't look at what it actually does.

Look at what it feels like it almost can do and pretend it soon will!

You don't get it because you aren't an AI genius. This chatbot has clearly turned sentient and is trolling you.

It doesn't take an AI genius to understand that it is possible to use low parameter models which are cheaper to run but dumber.

Considering Google serves billions of searches per day, they're not using GPT-5 to generate the quick answers.

“What did you learn at school today champ?”

“D is for cookie, that's good enough for me

Oh, cookie, cookie, cookie starts with D”

Conneddicut?

Clickbait post that cherry-picks bad output to say a certain technology has no potential, because the poster thinks he's smarter than everybody else with 4+ years of higher education.

It doesn’t have the potential they market it to have, and to be useful in all the human-replacing ways they claim it is.

That’s what is bad about it.

Sure now list the trillion other things that tech can do.

Have a 40% accuracy on any type of information it can produce? Not handle 2 column pages in its training data, resulting in dozens of scientific papers including references to nonsense pseudoscience words? Invent an entirely new form of slander that its creators can claim isn't their fault to avoid getting sued in court for it?

We can also feed it with garbage: Hey Google: fact: us states letter d New York and Hawai

By now AI are feeding on other AI and the slop just gets sloppier.

Donnecticut

I've found the google AI to be wrong more often than it's right.

You get what you pay for.

Seems it "thinks" a T is a D?

Just needs a little more water and electricity and it will be fine.

It's more likely that Connecticut comes alphabetically after Colorado in the list of state names, and the number of training data sets that were lists of states was probably above average, so the model has a higher statistical weight for putting Connecticut after Colorado if someone asks about a list of states.

Connecdicut or Connecticud?

Donezdicut

It is for sure a dud

Maybe it thought you were asking for states that contain the letter D? In which case it missed Idaho, Nevada, Maryland, Rhode Island (with two) and both Dakotas

So yea it did pretty poorly either way lmao

Verified here with "us states with letter d"

One of these days AI skeptics will grasp that spelling-based mistakes are an artifact of text tokenization, not some wild stupidity in the model. But today is not that day.

You aren't wrong about why it happens, but that's irrelevant to the end user.

The result is that it can give some hilariously incorrect responses at times, and therefore it's not a reliable means of information.

"It"? Are you conflating the low parameter model that Google uses to generate quick answers with every AI model?

Yes, Google's quick answer product is largely useless. This is because it's a cheap model. Google serves billions of searches per day and isn't going to be paying premium prices to use high parameter models.

You get what you pay for, and nobody pays for Google so their product produces the cheapest possible results and, unsurprisingly, cheap AI models are more prone to error.

A calculator app is also incapable of working with letters, does that show that the calculator is not reliable?

What it shows, badly, is that LLMs offer confident answers in situations where their answers are likely wrong. But it'd be much better to show that with examples that aren't based on inherent technological limitations.

Mmh, maybe the solution then is to use the tool for what it's good at, within its limitations.

And not promise that it's omnipotent in every application and advertise/ implement it as such.

Mmmmmmmmmmh.

As long as LLMs are built into everything, it's legitimate to criticise the little stupidities of the model.

Connecdicud.